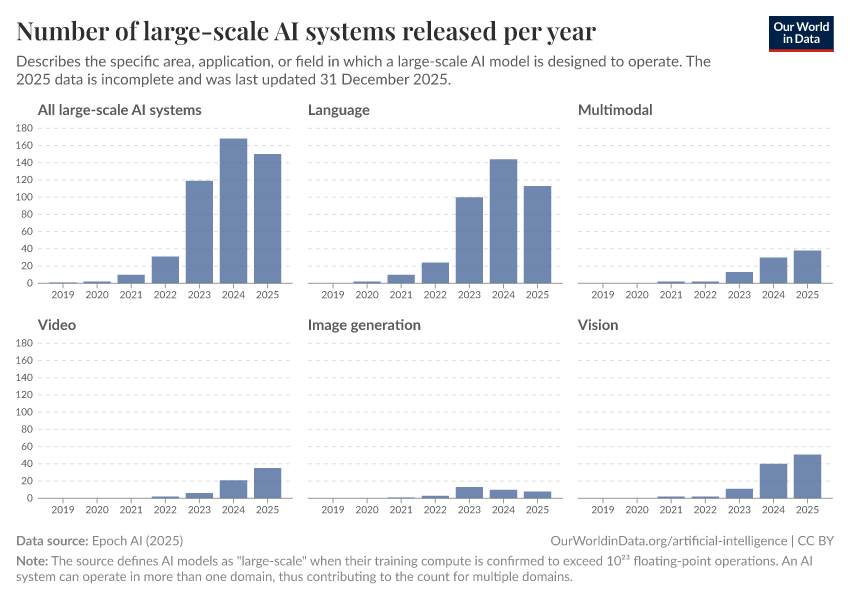

Number of large-scale AI systems released per year

What you should know about this indicator

- Game systems are specifically designed for games and excel in understanding and strategizing gameplay. For instance, AlphaGo, developed by DeepMind, defeated the world champion in the game of Go. Such systems use complex algorithms to compete effectively, even against skilled human players.

- Language systems are tailored to process language, focusing on understanding, translating, and interacting with human languages. Examples include chatbots, machine translation tools like Google Translate, and sentiment analysis algorithms that can detect emotions in text.

- Multimodal systems are artificial intelligence frameworks that integrate and interpret more than one type of data input, such as text, images, and audio. GPT-4 is an example of a multimodal model: it can process both textual and visual inputs and generate responses based on them.

- Vision systems focus on processing visual information, playing a pivotal role in image recognition and related areas. For example, Facebook's photo tagging model uses vision AI to identify faces.

- Speech systems are dedicated to handling spoken language, serving as the backbone of voice assistants and similar applications. They recognize, interpret, and generate spoken language to interact with users.

- Biology systems analyze biological data and simulate biological processes, aiding in drug discovery and genetic research.

- Image generation systems create visual content from text descriptions or other inputs, used in graphic design and content creation.

Sources and processing

This data is based on the following sources

How we process data at Our World in Data

All data and visualizations on Our World in Data rely on data sourced from one or several original data providers. Preparing this original data involves several processing steps. Depending on the data, this can include standardizing country names and world region definitions, converting units, calculating derived indicators such as per capita measures, as well as adding or adapting metadata such as the name or the description given to an indicator.

At the link below you can find a detailed description of the structure of our data pipeline, including links to all the code used to prepare data across Our World in Data.

Notes on our processing step for this indicator

The count of large-scale AI systems per domain is derived by tallying the machine learning models classified under each domain category. Note that a single machine learning model can fall under multiple domains, in which case it is counted once in each of them. The classification into domains is determined by the specific area, application, or field that the AI model is primarily designed to operate within.
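The tallying described above can be illustrated with a minimal sketch. The records, model names, and the `count_by_year_and_domain` helper below are hypothetical and only show the counting logic; they are not the actual Our World in Data pipeline or Epoch AI data.

```python
from collections import Counter

# Hypothetical records: (model name, release year, domains).
# These are made-up examples, not entries from the real dataset.
models = [
    ("model-a", 2022, ["Language"]),
    ("model-b", 2023, ["Language", "Vision"]),
    ("model-c", 2023, ["Multimodal"]),
    ("model-d", 2023, ["Vision"]),
]


def count_by_year_and_domain(records):
    """Tally models per (year, domain); a multi-domain model counts once per domain."""
    counts = Counter()
    for _name, year, domains in records:
        # set() guards against a domain being listed twice for the same model.
        for domain in set(domains):
            counts[(year, domain)] += 1
    return counts


counts = count_by_year_and_domain(models)
```

Because a model with several domains is counted under each of them, the per-domain counts in a given year can add up to more than the number of distinct models released that year.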

Reuse this work

Citations

How to cite this page

To cite this page overall, including any descriptions, FAQs or explanations of the data authored by Our World in Data, please use the following citation:

“Data Page: Number of large-scale AI systems released per year”, part of the following publication: Charlie Giattino, Edouard Mathieu, Veronika Samborska, and Max Roser (2023) - “Artificial Intelligence”. Data adapted from Epoch AI. Retrieved from https://archive.ourworldindata.org/20260308-063423/grapher/number-of-large-scale-ai-systems-released-per-year.html [online resource] (archived on March 8, 2026).

How to cite this data

In-line citation

If you have limited space (e.g. in data visualizations), you can use this abbreviated in-line citation:

Epoch AI (2025) – with major processing by Our World in Data

Full citation

Epoch AI (2025) – with major processing by Our World in Data. “Number of large-scale AI systems released per year” [dataset]. Epoch AI, “Tracking Compute-Intensive AI Models” [original data]. Retrieved April 1, 2026 from https://archive.ourworldindata.org/20260308-063423/grapher/number-of-large-scale-ai-systems-released-per-year.html (archived on March 8, 2026).

Download

Quick download

Download the data shown in this chart as a ZIP file containing a CSV file, metadata in JSON format, and a README. The CSV file can be opened in Excel, Google Sheets, and other data analysis tools.

Data API

Use these URLs to programmatically access this chart's data and configure your requests with the options below. Our documentation provides more information on how to use the API, and you can find a few code examples below.
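As a rough sketch of how the request options fit together, the snippet below assembles the CSV and metadata URLs from their parts. The `chart_url` helper and its parameter names are hypothetical; only the option values that appear in the URLs on this page (`v=1`, `csvType=full`, `useColumnShortNames=false`) are taken from the source, and any other values should be checked against the API documentation.

```python
from urllib.parse import urlencode

BASE = "https://ourworldindata.org/grapher"
SLUG = "number-of-large-scale-ai-systems-released-per-year"


def chart_url(extension, csv_type="full", use_short_names=False):
    """Build a chart data URL (hypothetical helper, not part of any OWID library)."""
    query = urlencode({
        "v": 1,
        "csvType": csv_type,
        # The URLs on this page spell booleans in lowercase ("false").
        "useColumnShortNames": str(use_short_names).lower(),
    })
    return f"{BASE}/{SLUG}.{extension}?{query}"


csv_url = chart_url("csv")
metadata_url = chart_url("metadata.json")
```

With the defaults, `csv_url` and `metadata_url` match the two URLs listed on this page.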

Data URL (CSV format)

https://ourworldindata.org/grapher/number-of-large-scale-ai-systems-released-per-year.csv?v=1&csvType=full&useColumnShortNames=false

Metadata URL (JSON format)

https://ourworldindata.org/grapher/number-of-large-scale-ai-systems-released-per-year.metadata.json?v=1&csvType=full&useColumnShortNames=false

Excel / Google Sheets

=IMPORTDATA("https://ourworldindata.org/grapher/number-of-large-scale-ai-systems-released-per-year.csv?v=1&csvType=full&useColumnShortNames=false")

Python with Pandas

import pandas as pd

import requests

# Fetch the data.

df = pd.read_csv("https://ourworldindata.org/grapher/number-of-large-scale-ai-systems-released-per-year.csv?v=1&csvType=full&useColumnShortNames=false", storage_options={"User-Agent": "Our World In Data data fetch/1.0"})

# Fetch the metadata

metadata = requests.get("https://ourworldindata.org/grapher/number-of-large-scale-ai-systems-released-per-year.metadata.json?v=1&csvType=full&useColumnShortNames=false").json()

R

library(jsonlite)

# Fetch the data

df <- read.csv("https://ourworldindata.org/grapher/number-of-large-scale-ai-systems-released-per-year.csv?v=1&csvType=full&useColumnShortNames=false")

# Fetch the metadata

metadata <- fromJSON("https://ourworldindata.org/grapher/number-of-large-scale-ai-systems-released-per-year.metadata.json?v=1&csvType=full&useColumnShortNames=false")

Stata

import delimited "https://ourworldindata.org/grapher/number-of-large-scale-ai-systems-released-per-year.csv?v=1&csvType=full&useColumnShortNames=false", encoding("utf-8") clear